Your organization has an AI policy. The hard part is making it real in the daily moments where people use AI to get work done.

Most teams try to solve this with an acceptable use policy document and a training session. That helps, but it does not control what happens when someone is staring at an empty prompt box and trying to move fast. Awareness without controls cannot effectively mitigate human risk.

This is why we’re excited to announce Amplifier Safe AI Usage, a solution built on the Amplifier platform. It combines the training you need to build awareness with in-the-moment guardrails so AI policy gets applied consistently across everyday GenAI use.

The problem: policy exists, behavior drifts

Every organization is asking the same question. How do we let employees use generative AI without leaking sensitive data, violating IP rules, or creating compliance risk?

Policies and annual training are necessary. They are not sufficient.

People do not remember the policy when they are pasting code into a chatbot, summarizing a customer contract, or rewriting a board update. They default to convenience. That is how “approved guidance” turns into “shadow AI.”

Safe AI Usage closes the gap between policy, intent, and behavior.

Safe AI Usage in four moves: Train, Coach, Harden, Shield

1) Train: baseline awareness that is short and role-relevant

Safe AI Usage includes a short training module that covers what employees actually need to know about using AI at work.

Concise training that focuses on real risks: proprietary code, internal documents, customer data, credentials, and regulated data

Assignable by role, group, or risk level

Trackable for audit readiness

Automated reminders and escalations in the tools employees already use, like Slack or Microsoft Teams

This gives you baseline compliance. It also sets up the next step, because baseline awareness works best when it is reinforced in the moment.

2) Coach: policy guidance that shows up inside the tool

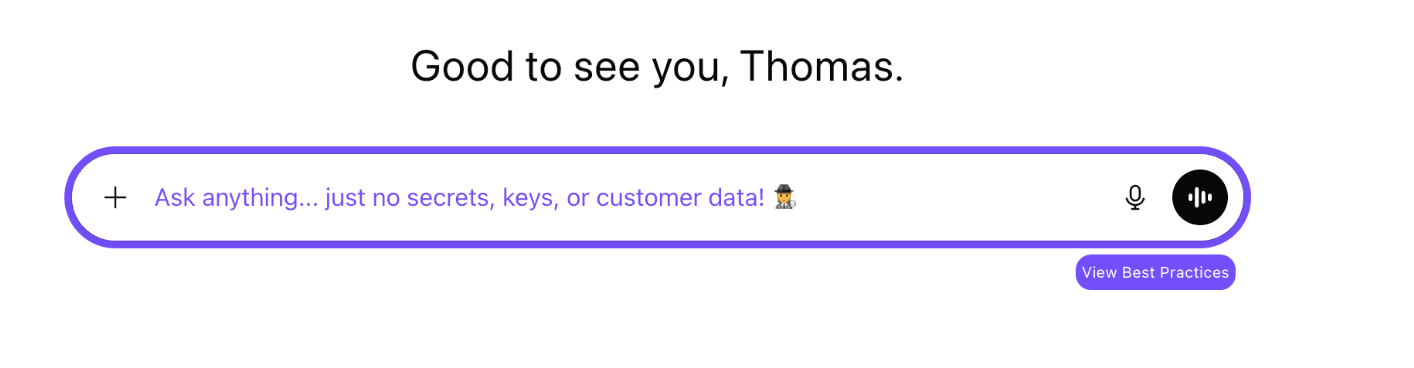

Safe AI Usage delivers coaching at the point of decision, inside the AI services employees are already using.

This is made possible through the Amplifier browser extension. It runs quietly until coaching matters, then delivers clear guidance without forcing employees to switch tabs or hunt down a policy page.

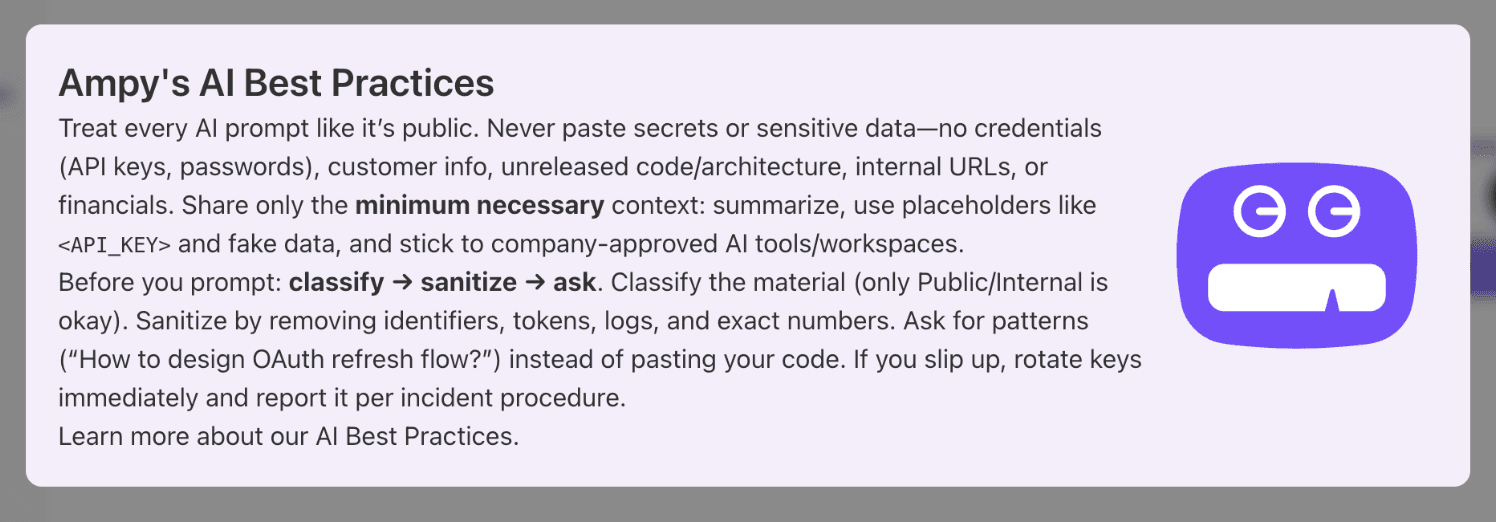

When someone opens a GenAI service, Safe AI Usage can provide two layers of coaching:

Inline prompt reminder: a lightweight nudge embedded directly in the prompt input field, so the guidance stays visible during the moment of action

Best-practices overlay: your organization’s approved guidance, tailored to the service and policy category

This is not generic advice. Admins define the policy guidance, and Amplifier delivers it when and where it matters.
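To make "tailored to the service and policy category" concrete, here is a minimal Python sketch of how admin-defined guidance might be looked up. Everything in it is an assumption for illustration: the `GUIDANCE` table, the `"*"` wildcard, the example domain, and the `guidance_for` helper are hypothetical and do not describe Amplifier's actual data model or API.

```python
# Hypothetical admin-defined guidance, keyed by (service, policy category).
# "*" is an assumed wildcard meaning "any service".
GUIDANCE = {
    ("code-assistant.example.com", "source_code"): "Do not paste proprietary code.",
    ("*", "customer_data"): "Remove customer names and identifiers before prompting.",
}

# Assumed fallback shown when no specific guidance has been defined.
DEFAULT_GUIDANCE = "Follow your organization's AI acceptable use policy."


def guidance_for(service: str, category: str) -> str:
    """Prefer service-specific guidance, then a category-wide entry, then the default."""
    for key in ((service, category), ("*", category)):
        if key in GUIDANCE:
            return GUIDANCE[key]
    return DEFAULT_GUIDANCE
```

The lookup order is the design choice worth noting: the most specific entry wins, so admins can set broad defaults per category and override them for individual services.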

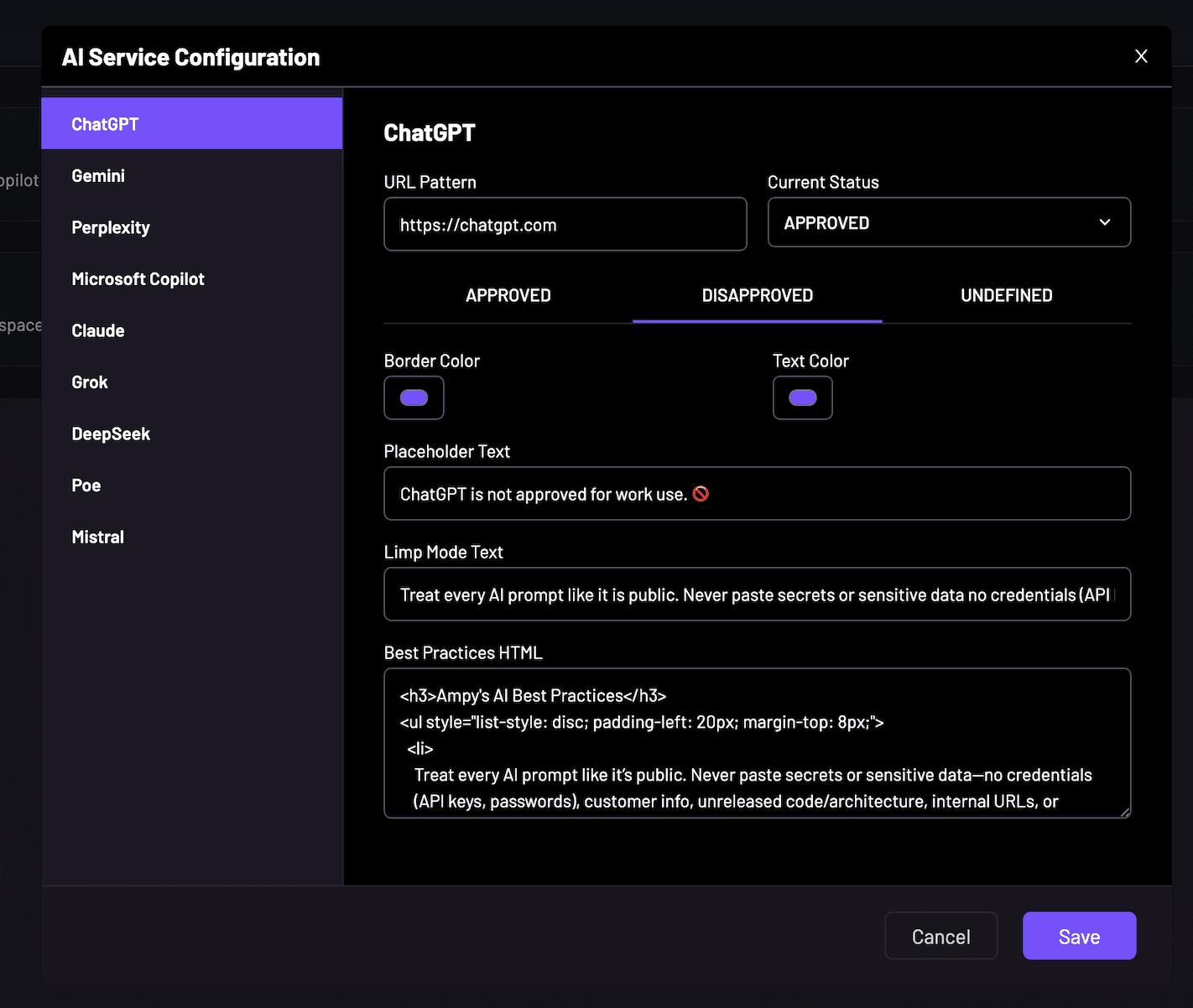

3) Harden: reduce unapproved AI tools and services

Hardening is about shrinking the problem space. If employees have dozens of AI tools within reach, policy compliance becomes a constant uphill battle.

Safe AI Usage helps reduce unapproved AI exposure across the employee work surface by turning discovery into governance.

Automatic discovery of AI services in use: identify the AI tools employees are actually accessing, including newly emerging services

Service classification: quickly categorize tools as Approved, Unapproved, or Undefined based on your governance model

Guided steering away from unapproved services: when someone lands in an unapproved tool, they get a clear warning plus direction to approved alternatives

Policy coverage that keeps pace: as new tools appear, admins can classify and deploy coaching fast, reducing the window where shadow AI grows unchecked

The result is fewer unapproved tools in daily circulation, less ambiguity for employees, and a smaller surface area for AI policy violations.
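The classify-and-steer flow described above can be sketched roughly as follows. The three status names come from this post; the registry contents, example domains, and the `classify` and `steer` helpers are hypothetical and are not Amplifier's actual implementation.

```python
from enum import Enum


class ServiceStatus(Enum):
    APPROVED = "Approved"
    UNAPPROVED = "Unapproved"
    UNDEFINED = "Undefined"


# Hypothetical admin-maintained registry: domain -> classification.
REGISTRY = {
    "approved-assistant.example.com": ServiceStatus.APPROVED,
    "random-chatbot.example.net": ServiceStatus.UNAPPROVED,
}

# Hypothetical approved alternatives to suggest when steering.
APPROVED_ALTERNATIVES = ["approved-assistant.example.com"]


def classify(domain: str) -> ServiceStatus:
    """Return the admin-assigned status; unknown services default to Undefined."""
    return REGISTRY.get(domain.lower(), ServiceStatus.UNDEFINED)


def steer(domain: str) -> str:
    """Build the message an employee would see for a given AI service."""
    status = classify(domain)
    if status is ServiceStatus.APPROVED:
        return f"{domain} is approved. Follow your organization's AI guidance."
    if status is ServiceStatus.UNAPPROVED:
        alternatives = ", ".join(APPROVED_ALTERNATIVES)
        return f"{domain} is not approved. Try instead: {alternatives}"
    return f"{domain} has not been reviewed yet. Avoid sharing sensitive data."
```

Defaulting unknown services to Undefined, instead of silently allowing or blocking them, is what lets newly discovered tools surface for an admin decision.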

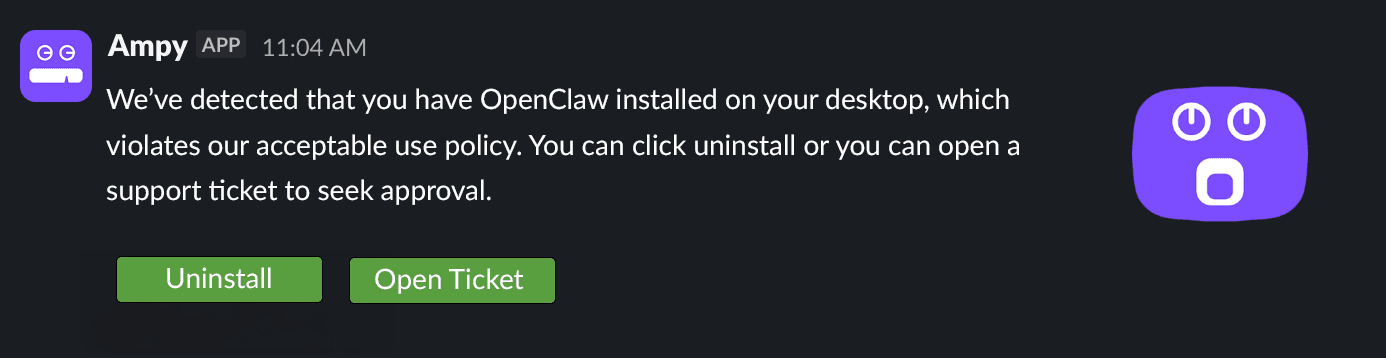

4) Shield: corrective actions guided by Amplifier

Shielding is what happens after risk shows up. You need more than visibility. You need a system that drives corrective actions until issues are closed.

Safe AI Usage turns AI policy violations and risky usage patterns, which typically come from employees acting as unintentional insider threats, into structured follow-through inside Amplifier.

Signals become findings: usage signals and policy breaches can generate findings when thresholds are exceeded (for example, repeat use of unapproved tools)

Guided interventions: Amplifier triggers corrective actions such as targeted coaching, reminders, and escalations based on risk level and behavior

Role-aware follow-up: corrective actions can reflect the employee’s role and context, so guidance is specific enough to drive change

Closed-loop outcomes: the platform tracks whether interventions worked and whether behavior shifted, so you can prove the policy is being applied in practice
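As one way to picture "signals become findings," the Python sketch below counts signals per employee and raises a finding once a threshold is exceeded, then picks an intervention. The `Signal` and `Finding` types, the threshold values, and the intervention rule are all assumptions made for illustration, not Amplifier's actual logic.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    employee: str
    kind: str  # e.g. "unapproved_tool_use"


@dataclass(frozen=True)
class Finding:
    employee: str
    kind: str
    count: int


# Hypothetical thresholds: a finding is raised once the count exceeds this value.
THRESHOLDS = {"unapproved_tool_use": 2}

# Hypothetical cutoff above which a finding is escalated instead of coached.
ESCALATION_COUNT = 5


def findings_from_signals(signals: list[Signal]) -> list[Finding]:
    """Group signals by employee and kind; emit a finding when a threshold is exceeded."""
    counts = Counter((s.employee, s.kind) for s in signals)
    findings = []
    for (employee, kind), count in counts.items():
        threshold = THRESHOLDS.get(kind)
        if threshold is not None and count > threshold:
            findings.append(Finding(employee, kind, count))
    return findings


def intervention_for(finding: Finding) -> str:
    """Pick a corrective action based on how often the behavior repeated."""
    if finding.count >= ESCALATION_COUNT:
        return "escalation"
    return "targeted coaching"
```

A closed-loop system would then re-run the same count after the intervention to check whether the behavior actually changed.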

This is the difference between “we saw the problem” and “the problem got fixed.”

The bottom line

The organizations that will adopt GenAI safely will not be the ones with the best-written policy. They will be the ones that put policy into practice at scale.

Safe AI Usage does that by combining:

Training for baseline compliance

Coaching in the flow of work

Hardening by reducing unapproved AI tools

Shielding through guided corrective actions that close the loop

Safe AI Usage is available today for early-access customers. To learn more or request access, visit amplifiersecurity.com or contact your Amplifier team.

FAQ

How do you go beyond AI policy and training to stop shadow AI?

Policies and training are necessary but not sufficient, because awareness without controls cannot effectively mitigate human risk. Solutions like Amplifier Safe AI Usage close the gap by adding in-the-moment guardrails and structured follow-up: Coaching (policy guidance inside the tool), Hardening (reducing unapproved AI tools), and Shielding (guided corrective actions once risk shows up). This approach applies your policy consistently across everyday GenAI use, stopping “approved guidance” from turning into “shadow AI.”

Why is security awareness training for GenAI acceptable use important?

Training is essential to build awareness and establish baseline compliance for your workforce. A short awareness video that focuses on real risks, such as leakage of proprietary code, customer data, and credentials, builds general policy awareness and prepares learners for the in-the-moment coaching that follows. The training is also trackable for audit readiness. This baseline awareness is the foundation, and it works best when it is reinforced in the moment by Safe AI Usage coaching.

Does in-the-moment AI coaching reduce employee productivity?

No. Amplifier Safe AI Usage delivers coaching at the point of decision, inside the AI services employees are already using, without forcing them to switch context. It uses the Amplifier browser extension, which runs quietly in the background until coaching is needed. It provides two non-disruptive layers: an inline prompt reminder that acts as a lightweight nudge embedded in the prompt input field, and a best-practices overlay with your organization’s approved guidance. This keeps the policy visible during the moment of action while limiting nudge fatigue.

Why is role-aware AI coaching important for security and compliance?

Generic guidance gets ignored, but context-specific guidance is more likely to be read. Role-aware follow-up within Amplifier Safe AI Usage ensures corrective actions reflect the employee’s role and context. This means guidance is specific enough to drive real change and is not dismissed as noise. This targeted approach matters for security and compliance because the risks differ by role: an engineer’s main concern is proprietary code, while an HR professional’s is employee data.

How can we track and prove AI policy compliance to auditors?

The Shield stage of Amplifier Safe AI Usage is key, because it provides closed-loop outcomes. Usage signals and policy breaches can generate findings when thresholds are exceeded. Amplifier then tracks whether the resulting guided interventions worked and whether employee behavior shifted. Additionally, the baseline training module is trackable for audit readiness. Together, these records give you evidence to show auditors that your policy is being applied in practice and that risks are being managed.