Shadow AI is often framed as a failure of technology, management, and communication, the result of strict policy and poor process. But if you look closer at the 2026 workplace, you’ll find that shadow AI often has a much different source: career anxiety.

With shadow IT, people often use unauthorized software out of some combination of convenience and preference. Getting IT approval takes too long, or they need different software to collaborate with a contractor or partner. While those motivations exist with shadow AI, career anxiety is also a big driver. In an era of mass layoffs, new AI tools and technology represent a significant opportunity for employees to increase their productivity and advance their careers – and security policy and repercussions may not be top of mind.

Employees are using their personal ChatGPT accounts to ask questions, looking to close skill and knowledge gaps in real time. They are secretly creating agents with Claude Code that skyrocket their output, and when promotion conversations come around, they are letting the results speak for themselves. This has created a sprawling phantom workforce, silently accessing and sharing sensitive files and systems without anyone truly understanding the full extent of it.

For security and IT teams, this new motivator is an important distinction for two reasons:

When people believe using a set of tools can help their careers, it accelerates the spread of shadow AI dramatically – especially when the tool is free or only costs $20/month.

If the use of unauthorized AI offers a competitive advantage, employees may be less forthcoming about which tools they’re using. This makes trying to find and remediate shadow AI more difficult.

This article examines shadow AI, highlighting critical risks and mitigation strategies. Discover how you can use a mix of governance policies, automation tools, and a friendly AI Security Engineer to manage shadow AI risks without disrupting workflows and undermining productivity gains.

What Exactly is Shadow AI?

Shadow AI is the use of generative AI tools by employees without IT approval or oversight. Common culprits include ChatGPT, Claude, Gemini, GitHub Copilot, and multiple specialized AI assistants for everything from meeting transcriptions to data analysis.

The risks come from a lack of visibility and governance, not from the tools themselves. The word “shadow” highlights that these tools are used outside authorized channels, even if some unsanctioned tools are not inherently insecure. This is especially dangerous with AI because personal accounts are often free, with the caveat that user inputs may be used to train the model, which can expose anything uploaded or pasted beyond the company’s control.

Examples of Shadow AI

Common examples of shadow AI include AI code assistants, LLMs such as ChatGPT to automate tasks like text editing, marketing and sales automation tools, and data analysis and visualization tools.

AI Code Assistants

Your developers may use tools like GitHub Copilot or other AI coding assistants without IT approval. While these tools boost productivity, developers might inadvertently paste proprietary code, internal APIs, or sensitive business logic into these platforms, exposing intellectual property and creating security vulnerabilities.

AI-Powered Meeting Transcription Tools

Employees often use unauthorized AI transcription services like Otter.ai or Fireflies.ai to record and summarize meetings. These tools improve your team’s efficiency; however, confidential discussions, sensitive client information, and proprietary data move beyond the company firewall onto external servers without proper security controls or data residency compliance.

Marketing Automation

Marketing teams might use unauthorized AI-driven platforms or AI-powered email optimization tools to personalize campaigns and improve engagement rates. The absence of IT oversight and governance can lead to the mishandling of customer data and noncompliance with regulations such as GDPR and CCPA.

Five Critical Shadow AI Risks Affecting Your Security Posture

From 2024 to 2025, the adoption of generative AI applications by enterprise employees grew from 65% to 79%. Alongside this growth came a rise in shadow AI posing risks such as data breaches, expanded attack surface, compliance violations, loss of competitive advantage, and reputational damage.

Data Breaches

When employees share information with an unsanctioned LLM through a personal account, they may be creating permanent records outside their company’s control. Everything employees paste or upload, whether it’s an email they want help drafting or a meeting transcript they want summarized, may be retained by the provider and, depending on the tool, account type, and settings, could be used to improve the model or otherwise exist outside the company’s control, creating a meaningful risk of data leakage. While training can often be disabled with corporate and some paid personal accounts, the reality is that most employees going rogue are using free versions with default settings.

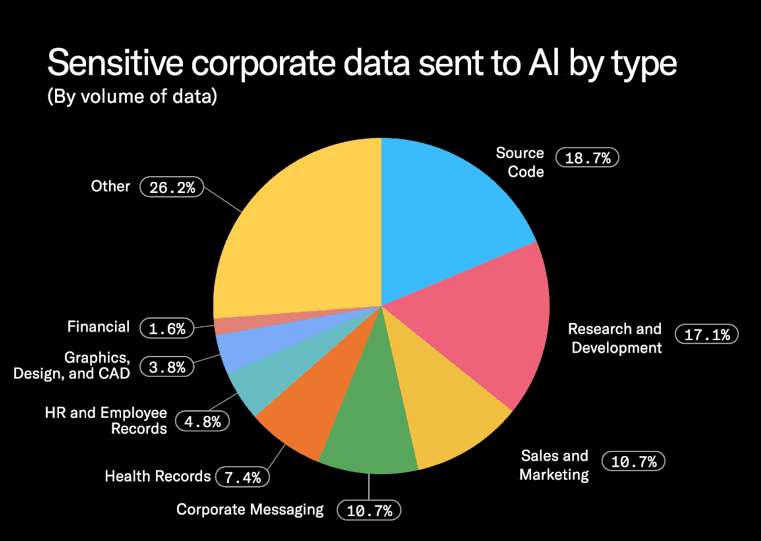

34% of all data that employees input into AI tools is classified as sensitive, which is a substantial increase from 10.7% observed two years ago. Unlike IT-approved applications that let you maintain oversight, you have no control over data entered into the void of shadow AI. So that code your developer used Claude to debug? Voila, it’s now effectively “open source” for others – including your competitors.

Expanded Attack Surface and Security Vulnerabilities

Shadow AI doesn't just expose sensitive data; it creates new attack vectors. The risk multiplies with every new integration, and each connection expands the attack surface. Some common attack surface expansions include:

Insecure API calls between AI applications and corporate systems

Data exfiltration via AI browser extensions that access local storage or session cookies

Shadow workflows that bypass existing access controls

ChatGPT enterprise connectors to Google Drive, HubSpot, and Box are typically activated by individual users, not by IT. This leaves the IT department without visibility, which is a serious security risk: your security teams cannot assess AI tools for encryption standards, retention policies, threat models, or vendor risk posture.

Employees creating personal AI accounts for corporate use often skip two-factor authentication, and the lack of enterprise security controls, such as strong authentication, encryption, or security monitoring, further increases security risk. Corporate credentials stored in unmonitored shadow AI tools for "personalization" introduce weaknesses that attackers can leverage to launch sophisticated social engineering attacks.

Compliance and Regulatory Violations

Shadow AI can create regulatory and legal issues because the tools have not been vetted for compliance. Consider the regulatory exposure: GDPR violations when AI tools process EU citizen data without a proper legal basis, or HIPAA breaches when healthcare workers enter patient information into unapproved chatbots.

The audit trail problem compounds the risk. Traditional applications maintain audit logs, which help you track data access and usage. However, shadow AI tools operating outside your security perimeter leave no such trail, giving you zero visibility.

Shadow AI creates an accountability gap that can lead to serious business repercussions. Whom do you hold accountable for a data breach: the employee who used the tool, or the department head who didn’t provide approved alternatives? Or do you hold your security team or CISO accountable for failing to detect and block the tool?

Loss of Competitive Advantage

Shadow AI fundamentally compromises a company's intellectual property, often turning confidential assets into general knowledge. When employees input proprietary data, unique source code, or innovative business methodologies into public, unauthorized LLMs, that information is often retained and used to train the AI model. This essentially converts the organization's unique competitive differentiators into a generalized data set, making them accessible or replicable by competitors who use the same tools. The result is the silent erosion of market advantage, as the intellectual property and trade secrets that once provided a unique edge are lost to the public domain, directly impacting long-term innovation and revenue potential.

Reputational Damage

AI-related security incidents quickly gain prominence and are closely scrutinized by multiple stakeholders. When these incidents surface, they raise serious questions about an organization's ability to safeguard critical information. Even a minor disclosure, such as an employee pasting confidential data into a public AI model, can trigger negative coverage and spark concerns about weak internal controls.

Additionally, content generated by unauthorized tools might contain biased or inappropriate information, damaging organizational credibility and customer trust.

Security Controls and Practices for Mitigating Shadow AI Risks

Emerging standards such as the Model Context Protocol (MCP) allow AI models to interact directly with your internal systems. These integrations create attack vectors that traditional security tools were not designed to detect.

Effective shadow AI risk mitigation requires a human-centric approach that blends visibility, enablement, and secure workflows. It takes more than blocking domains or creating stricter policies. Some of the most effective strategies for mitigating shadow AI risk are described below.

Gain Visibility into Shadow AI Usage

You can't manage what you can't see. Start by discovering what AI tools are actually being used across your organization. Identify all AI tools, models, extensions, and APIs currently in use across various departments. A detailed inventory of shadow AI tools within your organization is foundational for governance.
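As a rough illustration of passive discovery, the sketch below counts distinct users per AI tool from web proxy logs. The log format (a CSV with `user` and `host` columns), the `discover_ai_usage` helper, and the domain list are assumptions made for this example; a real deployment would use whatever your proxy, DNS, or endpoint tooling exports and a much longer, maintained domain list.

```python
import csv
from collections import defaultdict

# Illustrative, deliberately incomplete mapping of domains to tool names.
AI_DOMAINS = {
    "chatgpt.com": "ChatGPT",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
    "otter.ai": "Otter.ai",
    "fireflies.ai": "Fireflies.ai",
}

# Hypothetical proxy log export: one request per line.
SAMPLE_LOG = """user,host
alice,chatgpt.com
bob,chatgpt.com
alice,chatgpt.com
carol,app.otter.ai
dave,intranet.example.com
"""


def discover_ai_usage(log_lines):
    """Return {tool name: number of distinct users} for known AI domains."""
    users_by_tool = defaultdict(set)
    for row in csv.DictReader(log_lines):
        host = row["host"].strip().lower()
        for domain, tool in AI_DOMAINS.items():
            # Match the domain itself or any subdomain of it.
            if host == domain or host.endswith("." + domain):
                users_by_tool[tool].add(row["user"])
    return {tool: len(users) for tool, users in users_by_tool.items()}


if __name__ == "__main__":
    for tool, count in sorted(discover_ai_usage(SAMPLE_LOG.splitlines()).items()):
        print(f"{tool}: {count} user(s)")
```

Counting distinct users rather than raw requests keeps one heavy user from dominating the picture and feeds directly into a "user volume" figure for each tool.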

You can create an inventory template using the sample criteria mentioned in the table below. You can add or remove criteria based on your business-specific requirements. Conduct an assessment using the template to gain visibility into shadow AI usage within your organization. Identify the tools and the sensitive data they access to enable you to prioritize your response, as not all shadow AI carries equal risk.

| Criteria | Description | Examples / Indicators |
| --- | --- | --- |
| Department | The business function using the AI tool. Identify which team is using the tool and for what purpose. | Engineering, Marketing, Sales, HR, Finance, Customer Support, Product, Operations |
| AI Tool Name | The specific AI application, extension, model, or API. Capture both officially sanctioned and shadow AI tools. | ChatGPT, Gemini, Jasper, GitHub Copilot, Perplexity, AI Chrome extensions |
| Type of AI Tool | Categorize the tool based on functionality. Helps identify tool purpose and associated risks. | Code assistant, writing assistant, data analysis tool, automation agent, transcription, summarization |
| Use Case / Purpose | Why is the team using the tool? | Drafting emails, analyzing spreadsheets, generating code, summarizing meetings |
| Data Access Level | Types of data the tool interacts with. Classify data into sensitivity categories. | Public, internal, confidential, PII, PHI, financial, source code |
| Sensitive Data Exposure Risk | Whether the tool accesses or stores sensitive data. Evaluate based on tool behavior and employee input. | Uploading customer data into ChatGPT, copying source code into AI debuggers |
| Vendor Security Posture | Review the security controls of the AI vendor. Assess certifications, BAAs, SOC 2, GDPR, encryption, data handling. | SOC 2 Type II, GDPR compliant, unknown vendor, no BAA |
| Access Permissions Required | What the tool needs to function. Identify high-risk plugins or extensions. | Reads browser data, accesses clipboard, integrates with email/calendar |
| Authentication Method | How employees log into the tool. | Personal email, corporate SSO, shared accounts |
| Tool-Level Risk Score | Combined risk based on sensitivity, exposure, vendor quality, access. Can be numeric or High/Medium/Low. | High (interacts with PII + unknown vendor), Low (internal LLM) |
| User Volume | Number of employees using the tool. Helps prioritize enforcement or enablement. | 3 engineers, 12 marketers, 50 sales reps |
| Approval Status | Whether the tool is sanctioned or shadow AI. Determine governance gaps. | Approved, Pending Review, Not Approved, Unknown |
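If you would rather keep the inventory in code than in a spreadsheet, the criteria above map naturally onto a small record type. The following is a minimal sketch, not a prescribed schema: the `AIToolRecord` class, its field names, and the `shadow_tools` helper are hypothetical names chosen for this example, and the sample entries are made up.

```python
from dataclasses import dataclass
from enum import Enum


class ApprovalStatus(Enum):
    APPROVED = "Approved"
    PENDING_REVIEW = "Pending Review"
    NOT_APPROVED = "Not Approved"
    UNKNOWN = "Unknown"


@dataclass
class AIToolRecord:
    """One row of the shadow AI inventory (field names are illustrative)."""
    department: str
    tool_name: str
    tool_type: str
    use_case: str
    data_access_levels: list[str]
    vendor_security_posture: str
    access_permissions: list[str]
    authentication_method: str
    user_volume: int
    approval_status: ApprovalStatus = ApprovalStatus.UNKNOWN
    risk_score: str = "Unscored"


# Made-up sample entries for demonstration only.
inventory = [
    AIToolRecord(
        department="Engineering",
        tool_name="GitHub Copilot",
        tool_type="Code assistant",
        use_case="Generating code",
        data_access_levels=["source code"],
        vendor_security_posture="SOC 2 Type II",
        access_permissions=["Reads editor contents"],
        authentication_method="Corporate SSO",
        user_volume=3,
        approval_status=ApprovalStatus.APPROVED,
    ),
    AIToolRecord(
        department="Sales",
        tool_name="ChatGPT",
        tool_type="Writing assistant",
        use_case="Drafting emails",
        data_access_levels=["PII"],
        vendor_security_posture="Unknown",
        access_permissions=["Accesses clipboard"],
        authentication_method="Personal email",
        user_volume=50,
    ),
]


def shadow_tools(records):
    """Return every record that is not explicitly approved."""
    return [r for r in records if r.approval_status is not ApprovalStatus.APPROVED]


if __name__ == "__main__":
    for record in shadow_tools(inventory):
        print(record.department, record.tool_name, record.approval_status.value)
```

Defaulting `approval_status` to `UNKNOWN` means a newly discovered tool is treated as shadow AI until someone reviews it.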

Conduct routine audits to identify shadow AI tools and assess their data security risks. These audits will provide valuable insights to update the sanctioned AI apps lists and refine governance.

Prioritize Remediation Efforts Based on Shadow AI Risk Factors

Assess each shadow AI application based on two factors: its security posture and the sensitivity of the data it can access. Security posture should account for controls such as encryption, MFA, audit logging, and security attestations. Data exposure should reflect the likelihood that employees are sharing confidential, regulated, or proprietary information through the application. Based on these factors, classify shadow AI applications into high-, medium-, and low-risk categories, then prioritize remediation actions accordingly. High-risk applications should be addressed first, especially when weak security controls are combined with access to sensitive company data.
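The two-factor classification described above can be sketched in a few lines. The control names, the "two or more missing controls means weak posture" threshold, and the set of sensitive data categories are all assumptions for illustration; tune them to your own risk appetite.

```python
# Data categories treated as sensitive in this sketch (assumed list).
SENSITIVE_DATA = {"confidential", "PII", "PHI", "financial", "source code"}

# Security controls checked for each application (assumed names).
CONTROLS = ("encryption", "mfa", "audit_logging", "security_attestation")


def missing_controls(controls: dict[str, bool]) -> int:
    """Count controls that are absent or unknown (0 = all present)."""
    return sum(1 for name in CONTROLS if not controls.get(name, False))


def classify(controls: dict[str, bool], data_types: list[str]) -> str:
    """Combine security posture and data sensitivity into a risk tier."""
    weak_posture = missing_controls(controls) >= 2  # assumed threshold
    sensitive = bool(SENSITIVE_DATA & set(data_types))
    if weak_posture and sensitive:
        return "High"
    if weak_posture or sensitive:
        return "Medium"
    return "Low"


if __name__ == "__main__":
    all_controls = {name: True for name in CONTROLS}
    print(classify({}, ["PII"]))               # unknown vendor handling PII
    print(classify(all_controls, ["PII"]))     # vetted vendor handling PII
    print(classify(all_controls, ["public"]))  # vetted vendor, public data
```

Treating an unknown control as missing is a deliberately conservative choice: an unvetted tool is scored as if it had no protections until proven otherwise.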

Define Clear AI Governance Policies

Create an approved AI tool list with clear criteria for what makes a tool acceptable. Define guidelines on which types of AI systems can be used for different work scenarios and how sensitive information should be handled. This provides employees with clarity while ensuring security teams retain control.

Establish a process for quickly evaluating new tools so when employees request something, they get a decision in days, not months. A clearly defined governance framework can accommodate the fast-paced nature of AI adoption while maintaining security measures.

Implement Safe AI Usage Guardrails

Implementing guardrails around AI usage creates a safety net, reinforcing policy by guiding safe use of approved tools and reporting transgressions in a timely manner.

Implement technical controls to restrict unauthorized use of AI tools and automatically block high-risk applications without manual intervention. Data loss prevention (DLP) rules can help you detect when employees attempt to share sensitive information with unauthorized AI platforms and intervene in real time. You can implement browser-based controls and API monitoring to gain visibility into AI tool usage across your environment, even for non-SSO applications.

These guardrails work inconspicuously in the background, preventing risky data exposure while allowing employees to work productively within safe boundaries.
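To make the DLP idea concrete, here is a minimal sketch of a pattern-based check that could run before text is sent to an AI tool. The patterns, the approved-domain list, and the allow/warn/block policy are assumptions for illustration; commercial DLP products use far richer detection than a handful of regular expressions.

```python
import re

# A few illustrative detectors; real rule sets are much broader.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical sanctioned AI endpoint.
APPROVED_AI_DOMAINS = {"ai.internal.example.com"}


def find_sensitive(text: str) -> list[str]:
    """Return the sorted names of all patterns found in the text."""
    return sorted(name for name, rx in PATTERNS.items() if rx.search(text))


def evaluate_paste(destination: str, text: str) -> tuple[str, list[str]]:
    """Decide what to do with text headed for an AI tool (assumed policy)."""
    if destination in APPROVED_AI_DOMAINS:
        return ("allow", [])
    findings = find_sensitive(text)
    if findings:
        return ("block", findings)
    # Unapproved tool, nothing sensitive detected: nudge rather than block.
    return ("warn", [])


if __name__ == "__main__":
    print(evaluate_paste("chat.example-llm.com", "key=AKIAIOSFODNN7EXAMPLE"))
    print(evaluate_paste("chat.example-llm.com", "Summarize this blog post"))
    print(evaluate_paste("ai.internal.example.com", "SSN 123-45-6789"))
```

The "warn" outcome reflects the coaching-first approach this article argues for: the employee gets a reminder instead of a hard stop when no sensitive data is detected.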

The Human Factor: Reinforcing Best Practices and Taming Rogue AI Use In Real Time

Better visibility, tighter vetting, clearer policies, and stricter guardrails help control AI risk. But they don’t fully address the human factor: the employee deciding to use the unsanctioned AI tool. Dozens of new LLMs and AI tools are released every week, and most are free in some capacity. For the wide-eyed, overeager employee excited to find a productivity hack, the temptation is big, and the pressure to work around restrictive policy to get work done is even bigger.

Moment-of-risk interventions can play a huge role in reshaping employee behavior and reinforcing security education. And this is where a human risk management platform like Amplifier and an AI Security Engineer like Ampy can help busy IT and Security teams fill the gap with real-time shadow AI education and remediation.

After integrating the Amplifier platform with your security stack, Ampy can trigger a mix of prebuilt and custom workflows that make up our Safe AI solution. Safe AI closes the gap between policy, intent, and behavior in four distinct ways:

Training: Educating users on shadow AI risks with trackable training modules and automated reminders.

Coaching: Delivering best practices within the app and as overlays to reinforce training, offering reminders not to paste customer data or share sensitive keys.

Hardening: Reducing the attack surface by discovering and classifying AI tools in use and popping up warnings when users open unapproved tools.

Shielding: Driving corrective action that remediates active risk, like generating security warnings on repeat usage or assigning targeted coaching.

AI Security Engineer in Action

Ampy is an AI Security Engineer, designed to engage employees where they work – over communication platforms like Slack and Teams, via email, and even in the browser – and provide education, reinforce secure habits, and streamline remediation processes.

For example, when endpoint telemetry data shows an employee connecting to an unauthorized AI app, Ampy can share a list of approved AI apps in real time. It can also guide the employee through the process of removing the app or requesting an exception – no trying to remember which portal to log into or filling out tickets manually.

Ampy can also deliver micro lessons and security reminders when it detects risky behavior. For example, if it detects an employee pasting something into an AI tool, it could drop a reminder about the risks of sharing data with LLMs or even share a micro-lesson from an integrated SAT tool. Since Ampy is conversational, employees are also free to ask questions and dig deeper, helping them understand why certain tools are permitted and others aren’t.

Another benefit of Ampy is that it can effectively act as a friendly buffer between the employee and the security team or their manager. Giving employees the ability to self-remediate through the AI eases the sense of conflict that might emerge from being “found out”. This is especially true since Ampy engages in real time, not days or weeks later, minimizing the time invested in the shadow AI tool and the fallout from sensitive data being exposed.

Building Good Habits for the Shadow AI We Can’t See

Part of what makes shadow AI so difficult to monitor is the low barrier to entry. Unfortunately, that means that if employees truly want to conceal the use of AI, they could simply use a personal account on a personal device that Security teams have no visibility into.

That’s why behavioral reinforcement via an AI Security Engineer like Ampy is so critical to taming shadow AI risk. When an employee closes their corporate laptop and opens a personal tablet, the firewall becomes irrelevant. But the consistent nudges, the micro trainings, and the questions that were answered? That’s muscle memory that helps instill security even when employees are operating outside of the firewall.

Don't Let Shadow AI Trigger a Security Breach

It's time to act now to prevent shadow AI from introducing vulnerabilities and triggering security breaches. You need to recognize shadow AI as a signal about what employees need to be productive and respond with security that enables rather than blocks. Restrictive AI policies are not practical, and it might not be feasible for you to eliminate all instances of shadow AI.

Implementing an AI-powered human risk management platform like Amplifier Security enables you to contain these risks. An AI Security Engineer like Ampy can engage your employees at the point of risk, channeling rogue action into guided innovation. AI also helps instill true behavioral change and creates a blame-free security culture, converting people who engage in shadow AI – and even try to conceal it – into your strongest security allies.

FAQ

What are shadow AI platforms in an enterprise context?

Shadow AI platforms are AI tools, agents, or services used inside an organization without formal security approval or governance. They include public LLMs, embedded AI APIs, developer copilots, and autonomous agents. The risk comes from unmanaged identities and uncontrolled data flow. Over time, these systems accumulate access and permissions that security teams may not see.

How can we detect shadow AI without disrupting the business?

You detect shadow AI effectively by starting with passive discovery before enforcing blocking controls. Browser and network monitoring tools provide broad visibility, while identity and developer-layer tools add depth. Gradual rollout reduces operational friction. Clear communication prevents backlash from business teams.

Should we block all unauthorized AI tools immediately?

Blocking all unauthorized AI tools immediately often creates more risk through workarounds. Employees shift to personal devices or external networks where all visibility is lost. A visibility-first approach with coaching and guardrails can prevent rogue actions. Security training and education, delivered by AI Security Engineers at the moment of risk and across everyday use, also encourage safer practices if employees do use shadow AI.

How do we measure progress in managing shadow AI?

Progress is measured by visibility coverage, governed identity ratios, and reduction in high-risk data flows. Tracking the percentage of AI agents with assigned owners is useful. Monitoring reduction in unsanctioned API calls also indicates maturity. Absolute elimination is unrealistic.
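Two of these measures reduce to simple arithmetic. The sketch below shows one way to compute them; the function names and the shape of the agent records (dictionaries with an `owner` key) are assumptions for this example, and the numbers are made up.

```python
def owner_coverage(agents: list[dict]) -> float:
    """Percentage of AI agents that have a non-empty assigned owner."""
    if not agents:
        return 0.0
    owned = sum(1 for agent in agents if agent.get("owner"))
    return 100.0 * owned / len(agents)


def percent_reduction(previous: int, current: int) -> float:
    """Percentage drop from a previous count to the current one."""
    if previous == 0:
        return 0.0
    return 100.0 * (previous - current) / previous


if __name__ == "__main__":
    # Made-up sample data: four agents, two with owners.
    agents = [
        {"name": "report-bot", "owner": "alice"},
        {"name": "triage-agent", "owner": None},
        {"name": "code-helper", "owner": "bob"},
        {"name": "notes-agent", "owner": ""},
    ]
    print(f"Agents with owners: {owner_coverage(agents):.1f}%")
    # Unsanctioned API calls: 200 last quarter, 150 this quarter.
    print(f"Reduction in unsanctioned calls: {percent_reduction(200, 150):.1f}%")
```

Tracking these figures per quarter gives a trend line, which is more meaningful than any single snapshot given that absolute elimination is unrealistic.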